RMSProp (C2W2L07)

Take the Deep Learning Specialization: http://bit.ly/2PFq843

Check out all our courses: https://www.deeplearning.ai

Subscribe to The Batch, our weekly newsletter: https://www.deeplearning.ai/thebatch

Follow us:

Twitter: https://twitter.com/deeplearningai_

Facebook: https://www.facebook.com/deeplearningHQ/

Linkedin: https://www.linkedin.com/company/deeplearningai

Video: RMSProp (C2W2L07), from the DeepLearningAI channel

Other videos from this channel

Gradient Descent With Momentum (C2W2L06)

Gradient Descent With Momentum (C2W2L06) Exponentially Weighted Averages (C2W2L03)

Exponentially Weighted Averages (C2W2L03) Tutorial 16- AdaDelta and RMSprop optimizer

Tutorial 16- AdaDelta and RMSprop optimizer

Adagrad and RMSProp Intuition| How Adagrad and RMSProp optimizer work in deep learning

Adagrad and RMSProp Intuition| How Adagrad and RMSProp optimizer work in deep learning Understanding Mini-Batch Gradient Dexcent (C2W2L02)

Understanding Mini-Batch Gradient Dexcent (C2W2L02) Lecture 7 | Training Neural Networks II

Lecture 7 | Training Neural Networks II RMSProp Optimization from Scratch in Python

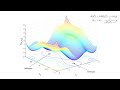

RMSProp Optimization from Scratch in Python Tom Goldstein: "What do neural loss surfaces look like?"

Tom Goldstein: "What do neural loss surfaces look like?" Lecture 10 | Recurrent Neural Networks

Lecture 10 | Recurrent Neural Networks The Evolution of Gradient Descent

The Evolution of Gradient Descent TensorFlow (C2W3L11)

TensorFlow (C2W3L11) Adam Optimization Algorithm (C2W2L08)

Adam Optimization Algorithm (C2W2L08) 23. Accelerating Gradient Descent (Use Momentum)

23. Accelerating Gradient Descent (Use Momentum) Lecture 11 | Detection and Segmentation

Lecture 11 | Detection and Segmentation C4W1L03 More Edge Detection

C4W1L03 More Edge Detection Lecture 12 | Visualizing and Understanding

Lecture 12 | Visualizing and Understanding Mini Batch Gradient Descent (C2W2L01)

Mini Batch Gradient Descent (C2W2L01) Lecture 9 | CNN Architectures

Lecture 9 | CNN Architectures