MuZero - Mastering Atari, Go, Chess and Shogi by Planning with a Learned Model

https://arxiv.org/abs/1911.08265

Constructing agents with planning capabilities has long been one of the main challenges in the pursuit of artificial intelligence. Tree-based planning methods have enjoyed huge success in challenging domains, such as chess and Go, where a perfect simulator is available. However, in real-world problems the dynamics governing the environment are often complex and unknown. In this work we present the MuZero algorithm which, by combining a tree-based search with a learned model, achieves superhuman performance in a range of challenging and visually complex domains, without any knowledge of their underlying dynamics. MuZero learns a model that, when applied iteratively, predicts the quantities most directly relevant to planning: the reward, the action-selection policy, and the value function. When evaluated on 57 different Atari games - the canonical video game environment for testing AI techniques, in which model-based planning approaches have historically struggled - our new algorithm achieved a new state of the art. When evaluated on Go, chess and shogi, without any knowledge of the game rules, MuZero matched the superhuman performance of the AlphaZero algorithm that was supplied with the game rules.
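To make "a model that, when applied iteratively, predicts the reward, the action-selection policy, and the value function" more concrete, here is a minimal sketch of that unrolling loop. It is not the authors' implementation. The paper describes learned representation, dynamics, and prediction functions, but the `ToyModel` class, its random linear weights, the `unroll` helper, and the sizes `HIDDEN` and `NUM_ACTIONS` are all hypothetical stand-ins for trained neural networks, and the tree search is left out entirely.

```python
import math
import random

random.seed(0)

HIDDEN = 4       # hypothetical hidden-state size
NUM_ACTIONS = 3  # hypothetical number of actions


def rand_matrix(rows, cols):
    """Random weights standing in for a trained network layer."""
    return [[random.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


def matvec(matrix, vector):
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def softmax(logits):
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


class ToyModel:
    """Untrained stand-in for the three learned functions."""

    def __init__(self, obs_size):
        self.w_repr = rand_matrix(HIDDEN, obs_size)
        self.w_dyn = rand_matrix(HIDDEN, HIDDEN + NUM_ACTIONS)
        self.w_reward = rand_matrix(1, HIDDEN + NUM_ACTIONS)
        self.w_policy = rand_matrix(NUM_ACTIONS, HIDDEN)
        self.w_value = rand_matrix(1, HIDDEN)

    def represent(self, observation):
        # Representation: observation -> initial hidden state.
        return [math.tanh(x) for x in matvec(self.w_repr, observation)]

    def dynamics(self, state, action):
        # Dynamics: (hidden state, action) -> (next hidden state, reward).
        one_hot = [1.0 if i == action else 0.0 for i in range(NUM_ACTIONS)]
        joined = state + one_hot
        next_state = [math.tanh(x) for x in matvec(self.w_dyn, joined)]
        reward = matvec(self.w_reward, joined)[0]
        return next_state, reward

    def predict(self, state):
        # Prediction: hidden state -> (policy, value).
        policy = softmax(matvec(self.w_policy, state))
        value = matvec(self.w_value, state)[0]
        return policy, value


def unroll(model, observation, actions):
    """Apply the model iteratively along a fixed action sequence."""
    state = model.represent(observation)
    policy, value = model.predict(state)
    steps = [{"reward": 0.0, "policy": policy, "value": value}]
    for action in actions:
        # The environment is never consulted here: only the model is stepped.
        state, reward = model.dynamics(state, action)
        policy, value = model.predict(state)
        steps.append({"reward": reward, "policy": policy, "value": value})
    return steps


model = ToyModel(obs_size=5)
trajectory = unroll(model, observation=[0.1, -0.2, 0.3, 0.0, 0.5], actions=[0, 2, 1])
for k, step in enumerate(trajectory):
    print(k, round(step["reward"], 3), round(step["value"], 3))
```

The point of the sketch is that every step after the first works purely on hidden states, so no game rules or simulator are needed during the rollout. In the actual algorithm these predictions feed a tree search rather than a fixed action sequence.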

Video "MuZero - Mastering Atari, Go, Chess and Shogi by Planning with a Learned Model" from the channel Julian Schrittwieser

Other videos from the channel

MuZero - ICAPS 2020

MuZero - ICAPS 2020 DeepMind Made A Superhuman AI For 57 Atari Games! 🕹

DeepMind Made A Superhuman AI For 57 Atari Games! 🕹 MuZero: Mastering Atari, Go, Chess and Shogi by Planning with a Learned Model

MuZero: Mastering Atari, Go, Chess and Shogi by Planning with a Learned Model Deepmind AlphaZero - Mastering Games Without Human Knowledge

Deepmind AlphaZero - Mastering Games Without Human Knowledge AlphaGo - The Movie | Full Documentary

AlphaGo - The Movie | Full Documentary David Silver: AlphaGo, AlphaZero, and Deep Reinforcement Learning | Lex Fridman Podcast #86

David Silver: AlphaGo, AlphaZero, and Deep Reinforcement Learning | Lex Fridman Podcast #86 AlphaZero: Shedding new light on the grand games of chess, shogi and Go

AlphaZero: Shedding new light on the grand games of chess, shogi and Go "Exactly How to Attack" | DeepMind's AlphaZero vs. Stockfish

"Exactly How to Attack" | DeepMind's AlphaZero vs. Stockfish Julian Schrittwieser – MuZero, Mastering Atari, Go, Chess and Shogi by Planning with a Learned Model

Julian Schrittwieser – MuZero, Mastering Atari, Go, Chess and Shogi by Planning with a Learned Model Can we build human-like AI? Should we? | Harri Valpola | TEDxHelsinkiUniversity

Can we build human-like AI? Should we? | Harri Valpola | TEDxHelsinkiUniversity Google Deepmind's AlphaZero Chess Engine Makes "Inhuman" Knight Sacrifice

Google Deepmind's AlphaZero Chess Engine Makes "Inhuman" Knight Sacrifice AI vs. Human: The Greatest Go Tournament Ever

AI vs. Human: The Greatest Go Tournament Ever Deep Mind AI Alpha Zero Sacrifices a Pawn and Cripples Stockfish for the Entire Game

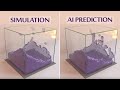

Deep Mind AI Alpha Zero Sacrifices a Pawn and Cripples Stockfish for the Entire Game How Well Can an AI Learn Physics? ⚛

How Well Can an AI Learn Physics? ⚛ Charles Blundell - Agent57: Outperforming the Atari Human Benchmark

Charles Blundell - Agent57: Outperforming the Atari Human Benchmark CS 181V Reinforcement Learning—Lecture 25(HMC Spring 2020): AlphaGo Zero, Alpha Zero, and Mu Zero

CS 181V Reinforcement Learning—Lecture 25(HMC Spring 2020): AlphaGo Zero, Alpha Zero, and Mu Zero MuZero: DeepMind’s New AI Mastered More Than 50 Games

MuZero: DeepMind’s New AI Mastered More Than 50 Games AlphaGo Zero Tutorial Part 1 - Overview

AlphaGo Zero Tutorial Part 1 - Overview This Superhuman Poker AI Was Trained in 20 Hours

This Superhuman Poker AI Was Trained in 20 Hours Why did Lee Sedol, one of the world’s best ‘Go’ players, retire from the game?

Why did Lee Sedol, one of the world’s best ‘Go’ players, retire from the game?