You Should Be Using Automatic Differentiation

WANT TO EXPERIENCE A TALK LIKE THIS LIVE?

Barcelona: https://www.datacouncil.ai/barcelona

New York City: https://www.datacouncil.ai/new-york-city

San Francisco: https://www.datacouncil.ai/san-francisco

Singapore: https://www.datacouncil.ai/singapore

Ryan Adams is a machine learning researcher at Twitter and a professor of computer science at Harvard. He co-founded Whetlab, a machine learning startup that was acquired by Twitter in 2015. He co-hosts the Talking Machines podcast.

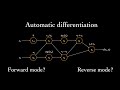

A big part of machine learning is the optimization of continuous functions. Whether for deep neural networks, structured prediction, or variational inference, machine learners spend a lot of time deriving gradients and verifying them. It turns out, however, that computers are good at doing this kind of calculus automatically, and automatic differentiation tools are becoming more mainstream and easier to use. In his talk, Adams will give an overview of automatic differentiation, with a particular focus on Autograd. He will also present several vignettes about using Autograd to learn hyperparameters in neural networks, perform variational inference, and design new organic molecules.
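To make the abstract's claim concrete, here is a minimal sketch of reverse-mode automatic differentiation in plain Python, followed by the kind of finite-difference gradient check the abstract alludes to. Reverse mode is the mode Autograd is built around, but this is not Autograd's code. The `Var` class and the `sin`, `grad`, and `finite_difference` helpers are hypothetical names chosen for illustration, and only scalar addition, multiplication, and sine are supported.

```python
import math


class Var:
    """A scalar node in a computation graph, recorded for reverse-mode AD."""

    def __init__(self, value, parents=()):
        self.value = float(value)
        # Each parent is stored with the local partial derivative d(self)/d(parent).
        self.parents = parents
        self.grad = 0.0

    def __add__(self, other):
        other = other if isinstance(other, Var) else Var(other)
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    __radd__ = __add__

    def __mul__(self, other):
        other = other if isinstance(other, Var) else Var(other)
        return Var(self.value * other.value,
                   ((self, other.value), (other, self.value)))

    __rmul__ = __mul__


def sin(x):
    """sin that works on plain floats and also records itself on Var inputs."""
    if not isinstance(x, Var):
        return math.sin(x)
    return Var(math.sin(x.value), ((x, math.cos(x.value)),))


def grad(f):
    """Return a function computing df/dx for a scalar-to-scalar f (hypothetical helper)."""
    def df(x):
        inp = Var(x)
        out = f(inp)
        # Topologically order the graph so every node comes after its parents.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent, _ in node.parents:
                    visit(parent)
                order.append(node)

        visit(out)
        # Reverse sweep: push adjoints from the output back to the input (chain rule).
        out.grad = 1.0
        for node in reversed(order):
            for parent, local in node.parents:
                parent.grad += local * node.grad
        return inp.grad
    return df


def f(x):
    # The same code runs on plain floats and on Var nodes.
    return x * sin(x) + 3 * x


def finite_difference(g, x, h=1e-5):
    """Central-difference estimate of g'(x), used only to check the AD result."""
    return (g(x + h) - g(x - h)) / (2 * h)


df = grad(f)
x0 = 2.0
print(df(x0))                    # exact value: sin(x0) + x0*cos(x0) + 3
print(finite_difference(f, x0))  # agrees up to finite-difference error
```

Recording each operation's local derivative during the forward pass and then sweeping backward once is what lets reverse mode return the full gradient of a scalar output in a single backward pass, which is why it suits the scalar loss functions common in machine learning.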

FOLLOW DATA COUNCIL:

Twitter: https://twitter.com/DataCouncilAI

LinkedIn: https://www.linkedin.com/company/datacouncil-ai

Facebook: https://www.facebook.com/datacouncilai

Video "You Should Be Using Automatic Differentiation" from the Data Council channel

