Disambiguating Monocular Depth Estimation with a Single Transient (ECCV 2020)

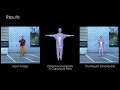

Monocular depth estimation algorithms successfully predict the relative depth order of objects in a scene. However, because of the fundamental scale ambiguity associated with monocular images, these algorithms fail at correctly predicting true metric depth. In this work, we demonstrate how a depth histogram of the scene, which can be readily captured using a single-pixel time-resolved detector, can be fused with the output of existing monocular depth estimation algorithms to resolve the depth ambiguity problem. We validate this novel sensor fusion technique experimentally and in extensive simulation. We show that it significantly improves the performance of several state-of-the-art monocular depth estimation algorithms.
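One simple way to picture such a fusion is histogram matching: keep the depth ordering predicted by the monocular network, but remap the values so that their distribution follows the measured depth histogram. The sketch below is illustrative only and is not necessarily the procedure used in this work. The function name `match_depth_to_histogram` and its parameters are hypothetical, and the sketch assumes a noise-free histogram whose bins are already expressed in metric depth.

```python
import numpy as np

def match_depth_to_histogram(pred_depth, bin_edges, hist_counts):
    """Remap a relative depth map so its value distribution follows a target
    depth histogram while preserving the predicted depth order.

    Hypothetical helper for illustration; assumes a clean histogram with
    len(bin_edges) == len(hist_counts) + 1 and at least one nonzero count.
    """
    pred = np.asarray(pred_depth, dtype=float)
    edges = np.asarray(bin_edges, dtype=float)
    counts = np.asarray(hist_counts, dtype=float)

    # Cumulative distribution of the target histogram, evaluated at bin edges.
    cdf = np.concatenate(([0.0], np.cumsum(counts)))
    cdf /= cdf[-1]

    # Rank-based quantile of every pixel in (0, 1); ties are broken by position.
    flat = pred.ravel()
    order = np.argsort(flat, kind="stable")
    quantiles = np.empty(flat.size)
    quantiles[order] = (np.arange(flat.size) + 0.5) / flat.size

    # Inverse target CDF by linear interpolation: quantile -> metric depth.
    matched = np.interp(quantiles, cdf, edges)
    return matched.reshape(pred.shape)

# Toy example: a 2x2 relative depth map and a uniform histogram over 0 to 8 m.
relative = np.array([[0.1, 0.2], [0.3, 0.4]])
metric = match_depth_to_histogram(relative, [0, 2, 4, 6, 8], [1, 1, 1, 1])
```

Because the remapping is monotonic, the relative ordering of pixels is unchanged; only the scale and distribution of the depth values are adjusted to agree with the histogram.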

Video: Disambiguating Monocular Depth Estimation with a Single Transient (ECCV 2020), from the Stanford Computational Imaging Lab channel

Video information

July 19, 2020, 4:17:11

00:05:47

Other videos from the channel

Object Depth from Motion and Segmentation: ECCV 2020 Presentation

Consistent Video Depth Estimation

CVPR 2020: D3S - A Discriminative Single Shot Segmentation Tracker

Single-Photon 3D Imaging with Deep Sensor Fusion | SIGGRAPH 2018

Stanford Computational Imaging Lab - Overview 06/2020

DeepCap: Monocular Human Performance Capture Using Weak Supervision (CVPR 2020) - Oral

DeepFit : 3D Surface Fitting via Neural Network Weighted Least Squares (ECCV 2020 Oral)

Unsupervised Monocular Depth Estimation With Left-Right Consistency

Real-Time 3D Reconstruction and 6-DoF Tracking with an Event Camera

Wave-Based Non-Line-of-Sight Imaging using Fast f–k Migration | SIGGRAPH 2019

World-Consistent Video-to-Video Synthesis (ECCV 2020)

End-to-end Optimization of Optics and Image Processing | SIGGRAPH 2018

Implicit Neural Representations with Periodic Activation Functions

Holographic Near-Eye Displays Based on Overlap-Add Stereograms

Autofocals: Evaluating Gaze-Contingent Eyeglasses for Presbyopes

Gaze-contingent Stereo Rendering for VR/AR | SIGGRAPH Asia 2020

Contact and Human Dynamics from Monocular Video (ECCV 2020)

[CVPR 2020] Simple but Effective Image Enhancement Techniques

A U-Net Based Discriminator for Generative Adversarial Networks, CVPR 2020 (10 min overview)

SNE-RoadSeg (ECCV 2020)