Cross-Task Generalization via Natural Language Crowdsourcing Instructions

This video explains the paper at https://arxiv.org/abs/2104.08773.

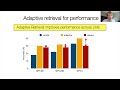

Abstract: Humans (e.g., crowdworkers) have a remarkable ability in solving different tasks, by simply reading textual instructions that define them and looking at a few examples. Despite the success of the conventional supervised learning on individual datasets, such models often struggle with generalization across tasks (e.g., a question-answering system cannot solve classification tasks). A long-standing challenge in AI is to build a model that learns a new task by understanding the human-readable instructions that define it. To study this, we introduce NATURAL INSTRUCTIONS, a dataset of 61 distinct tasks, their human-authored instructions, and 193k task instances (input-output pairs). The instructions are obtained from crowdsourcing instructions used to create existing NLP datasets and mapped to a unified schema. Using this meta-dataset, we measure cross-task generalization by training models on seen tasks and measuring generalization to the remaining unseen ones. We adopt generative pre-trained language models to encode task-specific instructions along with input and generate task output. Our results indicate that models benefit from instructions when evaluated in terms of generalization to unseen tasks (19% better for models utilizing instructions). These models, however, are far behind an estimated performance upper bound, indicating significant room for more progress in this direction.
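The evaluation setup the abstract describes (encode a task's instructions together with each instance input, train on seen tasks, evaluate on held-out unseen tasks) can be sketched in Python as below. This is a minimal illustration under stated assumptions, not the authors' code: the `Task` fields, the prompt template in `encode`, and the `split_by_task` helper are hypothetical, the paper's actual unified schema is not reproduced here, and the language model itself is omitted.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Task:
    # Hypothetical, simplified schema; the paper's unified schema has its own fields.
    name: str
    definition: str
    positive_examples: list = field(default_factory=list)  # (input, output) pairs
    instances: list = field(default_factory=list)  # (input, output) pairs


def encode(task: Task, x: str, with_instructions: bool = True) -> str:
    """Build the text a generative LM would see: instruction fields, then the input."""
    parts = []
    if with_instructions:
        parts.append(f"Definition: {task.definition}")
        for ex_in, ex_out in task.positive_examples:
            parts.append(f"Example input: {ex_in}\nExample output: {ex_out}")
    # The model is expected to continue this text with the task output.
    parts.append(f"Input: {x}\nOutput:")
    return "\n\n".join(parts)


def split_by_task(tasks: list, n_unseen: int, seed: int = 0):
    """Split at the task level, so no instance of an unseen task appears in training."""
    rng = random.Random(seed)
    shuffled = tasks[:]
    rng.shuffle(shuffled)
    return shuffled[n_unseen:], shuffled[:n_unseen]  # (seen, unseen)
```

Splitting by task rather than by instance is what makes this a test of cross-task generalization: a model evaluated on an unseen task has only that task's instructions to go on. Calling `encode(..., with_instructions=False)` gives the no-instruction baseline that instruction-augmented models are compared against.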

Video: Cross-Task Generalization via Natural Language Crowdsourcing Instructions, from the Allen Institute for AI channel
