Neural Ordinary Differential Equations - part 1 (algorithm review) | AISC

Toronto Deep Learning Series, 14-Jan-2019

https://tdls.a-i.science/events/2019-01-14

Paper: https://arxiv.org/abs/1806.07366

Discussion Panel: Jodie Zhu, Helen Ngo, Lindsay Brin

Host: SAS Institute Canada

NEURAL ORDINARY DIFFERENTIAL EQUATIONS

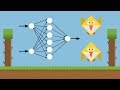

We introduce a new family of deep neural network models. Instead of specifying a discrete sequence of hidden layers, we parameterize the derivative of the hidden state using a neural network. The output of the network is computed using a black-box differential equation solver. These continuous-depth models have constant memory cost, adapt their evaluation strategy to each input, and can explicitly trade numerical precision for speed. We demonstrate these properties in continuous-depth residual networks and continuous-time latent variable models. We also construct continuous normalizing flows, a generative model that can train by maximum likelihood, without partitioning or ordering the data dimensions. For training, we show how to scalably backpropagate through any ODE solver, without access to its internal operations. This allows end-to-end training of ODEs within larger models.
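The core idea in the abstract can be sketched in a few lines. This is a minimal, dependency-free sketch under our own assumptions, not the authors' code. The derivative network is a hypothetical two-layer tanh MLP (`make_dynamics`, with made-up weights). The paper uses adaptive black-box solvers; this sketch swaps in a fixed-step fourth-order Runge-Kutta integrator (`odeint`), so `n_steps` stands in for the solver tolerance when trading precision for speed. The hypothetical helper `adjoint_grad_linear` illustrates the adjoint method on the one-dimensional linear ODE `dz/dt = theta * z` with loss `L = z(t1)`. It integrates the augmented state `[z, a, dL/dtheta]` backward in time from `t1` to `t0` and recomputes `z` instead of storing the forward trajectory, which is the source of the constant memory cost.

```python
import math

def rk4_step(f, t, y, dt):
    """One classical Runge-Kutta (RK4) step for a state stored as a list of floats."""
    k1 = f(t, y)
    k2 = f(t + dt / 2, [yi + dt / 2 * ki for yi, ki in zip(y, k1)])
    k3 = f(t + dt / 2, [yi + dt / 2 * ki for yi, ki in zip(y, k2)])
    k4 = f(t + dt, [yi + dt * ki for yi, ki in zip(y, k3)])
    return [yi + dt / 6 * (a + 2 * b + 2 * c + d)
            for yi, a, b, c, d in zip(y, k1, k2, k3, k4)]

def odeint(f, y0, t0, t1, n_steps):
    """Integrate dy/dt = f(t, y) from t0 to t1 with fixed-step RK4.
    t1 may be smaller than t0, in which case the step size is negative."""
    dt = (t1 - t0) / n_steps
    t, y = t0, list(y0)
    for _ in range(n_steps):
        y = rk4_step(f, t, y, dt)
        t += dt
    return y

def make_dynamics(W1, b1, W2, b2):
    """Hypothetical learned derivative: f(t, h) = W2 tanh(W1 h + b1) + b2.
    The time argument t is accepted but unused in this sketch."""
    def f(t, h):
        hidden = [math.tanh(sum(w * x for w, x in zip(row, h)) + b)
                  for row, b in zip(W1, b1)]
        return [sum(w * z for w, z in zip(row, hidden)) + b
                for row, b in zip(W2, b2)]
    return f

def adjoint_grad_linear(theta, z0, t0, t1, n_steps=200):
    """Adjoint sensitivity for dz/dt = theta * z with loss L = z(t1).
    Returns (dL/dz0, dL/dtheta) by integrating [z, a, g] backward in time."""
    # Forward pass: only the final state z(t1) is kept.
    z1 = odeint(lambda t, s: [theta * s[0]], [z0], t0, t1, n_steps)[0]

    def aug(t, s):
        z, a, _ = s
        return [theta * z,   # dz/dt = f(z, theta)
                -a * theta,  # da/dt = -a * df/dz
                -a * z]      # dg/dt = -a * df/dtheta

    # Start from [z(t1), dL/dz(t1) = 1, 0] and run from t1 back to t0.
    _, a0, g = odeint(aug, [z1, 1.0, 0.0], t1, t0, n_steps)
    return a0, g
```

For this linear case the closed form is `dL/dtheta = z0 * (t1 - t0) * exp(theta * (t1 - t0))`, so the adjoint result can be checked directly. With a real network, the two partial derivatives in `aug` would come from automatic differentiation (vector-Jacobian products) rather than being written by hand.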

Video "Neural Ordinary Differential Equations - part 1 (algorithm review) | AISC" from the channel ML Explained - A.I. Socratic Circles - AISC

Video information

Date: 4 February 2019, 19:58:34

Duration: 00:24:35